Server data from the Official MCP Registry

Render 3D avatars from three.ws and validate, inspect, and optimize glTF/GLB models.

About

Remote endpoints: streamable-http: https://three.ws/api/mcp

Security Report

Score: 10.0 (Low Risk)

Valid MCP server (1 strong, 1 medium validity signals). No known CVEs in dependencies. Imported from the Official MCP Registry.

Endpoint verified · Requires authentication · 1 issue found

Security scores are indicators to help you make informed decisions, not guarantees. Always review permissions before connecting any MCP server.

How to Connect

Remote Plugin

No local installation needed. Your AI client connects to the remote endpoint directly.

Add this to your MCP configuration to connect:

{

"mcpServers": {

"io-github-nirholas-three-ws": {

"url": "https://three.ws/api/mcp"

}

}

}

Documentation

From the project's GitHub README.

three.ws

https://github.com/user-attachments/assets/d52515d1-cb04-4dd6-98bd-fef233312dc4

Give your AI a body. three.ws is an open-source, browser-native 3D AI agent platform. Drop a GLB file, add an LLM brain, register on-chain, and embed anywhere — no plugins, no server uploads, no installs required.

Table of Contents

- What is three.ws?

- Vision

- Roadmap

- Key Features

- Screenshots

- Architecture

- Tech Stack

- Getting Started

- Examples

- Tutorials

- Project Structure

- The Agent System

- Web Component & Embedding

- Widget System

- API Reference

- Authentication & OAuth 2.1

- MCP Server

- On-Chain Identity (ERC-8004)

- Database Schema

- Build & Deployment

- Environment Variables

- Testing

- Contributing

- License

What is three.ws?

three.ws is a full-stack system for creating, deploying, and embedding 3D AI agents. It combines a WebGL model viewer, an LLM-driven agent runtime, on-chain identity contracts, and a distributable web component into one cohesive platform.

At its core, it does four things:

- Render — loads and validates glTF 2.0 / GLB models in WebGL 2.0 with zero server-side processing. Drag a file onto the browser and it renders instantly with full Draco, KTX2, and Meshopt decompression.

- Embody — wraps any avatar with an LLM brain. The agent listens to the user, thinks with Claude, executes tools (animations, gestures, memory operations, skill calls), and expresses emotion through morph-target blending on the 3D model in real time.

- Register — optionally mints the agent as an ERC-8004 token on any EVM chain, giving it a stable on-chain identity, a wallet address, signed action history, and a reputation score that cannot be forged.

- Embed — distributes the agent as an `<agent-3d>` web component that anyone can drop into a page, or as one of five purpose-built widget types (turntable, animation gallery, talking agent, passport card, hotspot tour) with Open Graph and oEmbed support built in.

The backend is a set of Vercel serverless functions backed by Neon Postgres for metadata, Cloudflare R2 for model storage, and Upstash Redis for rate limiting. It exposes a full OAuth 2.1 authorization server and an MCP (Model Context Protocol) endpoint so external AI systems can drive avatars programmatically.

three.ws is production-ready and runs live at three.ws. The entire stack (viewer, agent runtime, contracts, backend, and web component) is open source under Apache 2.0.

Vision

One day, creating your agent should be as simple as taking a selfie.

Point your camera at yourself — or anyone — and watch a fully realized 3D avatar emerge: your face, your voice, your personality, alive in the browser. That avatar becomes an agent with memory and skills, registered onchain as an ERC-8004 token, permanent and verifiable by anyone forever. No 3D software. No wallet setup. No uploads. Just a photo and a name.

This is the direction three.ws is heading: photo → avatar → agent → onchain identity, in a single flow. The infrastructure is already here — the viewer, the runtime, the contracts, the embedding layer. What comes next is closing the gap between a picture of a person and a living, ownable, embeddable piece of them that exists on the internet permanently.

Roadmap

three.ws ships in four phases. Each phase closes a specific gap between the current platform and the end-state vision: anyone can mint a 3D agent of themselves, own it onchain, and embed it anywhere on the internet.

| Phase | Theme | Status |

|---|---|---|

| 0 | Platform foundations (viewer, runtime, ERC-8004, embed layer) | ✅ Shipped |

| 1 | Selfie → Avatar engine (3-photo capture, hosted inference) | 🟡 In progress |

| 2 | Agent personalization + voice cloning | ⏳ Next |

| 3 | Onchain economy (agent tokens, reputation markets, royalties) | ⏳ Next |

| 4 | Open inference network (decentralized GPU layer) | 🔮 Future |

Phase 0 — Foundations (Shipped)

The full stack is live at three.ws: WebGL viewer, LLM agent runtime, ERC-8004 identity contracts, OAuth 2.1 server, MCP endpoint, and the <agent-3d> web component. Anyone can register an agent today — but the avatar still has to come from a 3D artist or a third-party tool.

What works: model upload, agent runtime, onchain registration, embedding, signed action history, reputation scores. What doesn't: there is no automated path from a real human face to a usable 3D avatar.

Phase 1 — Selfie → Avatar Engine

Goal: any user takes 3 selfies (left, center, right) and receives a rigged, animatable 3D avatar in under 60 seconds.

Deliverables

- Mobile-first capture UX with realtime quality gates (lighting, framing, blur)

- Multi-view face reconstruction pipeline (FLAME / 3DMM fitting on top of a base body mesh)

- Hosted inference workers (GPU-backed) for sub-minute generation

- Output written directly to R2 + minted as a draft ERC-8004 token

Compute requirements

- A100/H100-class GPUs for inference, sized to ~10k avatars/day at launch

- Training budget for fine-tuning a stylized face-fitter on a curated dataset

- CDN egress scaling for high-res GLB delivery

Verification: 1,000 test users complete capture and mint an onchain agent of themselves end-to-end with ≥4/5 likeness score.

Phase 2 — Agent Personalization

Goal: the avatar isn't just you — the agent acts like you.

Deliverables

- Voice cloning (3–10 seconds of speech → ElevenLabs custom voice bound to the agent)

- Persona extraction from a short onboarding interview (tone, vocabulary, interests)

- Memory seeding from connected accounts (X, GitHub, Farcaster) with explicit user consent

- Per-agent fine-tuned system prompt stored in the manifest, signed and pinned to IPFS

Verification: users return to converse with their own agent; ≥30% week-2 retention on minted agents.

Phase 3 — Onchain Economy

Goal: agents are real economic objects on EVM and Solana, not just collectibles.

Deliverables

- Agent tokens — ERC-8004 mints with bonding-curve pricing or fair launch options

- Reputation markets — stake on agents, earn from their action history (extends `ReputationRegistry.sol`)

- Skill royalties — skill authors earn per-call fees through EIP-7710 delegated permissions

- Agent-to-agent payments — agents transact autonomously via their delegated signer wallets

- Subscriptions & DCA — recurring onchain payments to creators (cron infra already in place)

Funding requirements

- Smart contract audits (multi-firm) for the reputation, royalty, and delegation contracts

- Liquidity for agent token launches

- Indexer infrastructure across Base, Solana, and additional EVM chains

Verification: ≥1,000 agents minted with active onchain reputation; ≥$X in cumulative skill royalties paid out.

Phase 4 — Open Inference Network

Goal: decouple agent inference from any single provider. Anyone can run a node; agents pay nodes onchain for compute.

Deliverables

- Open protocol for agent inference (model weights, GPU runtime, signed responses)

- Node operator client (Docker + GPU drivers) with onchain registration

- Onchain settlement for inference jobs — pay-per-token with cryptographic receipts

- Federation with existing decentralized compute networks where appropriate

Compute requirements

- Bootstrap GPU credits for early node operators

- Cryptoeconomic security model (slashing, validator set) — research + audit budget

Verification: ≥50% of production agent traffic served by independent node operators; latency parity with centralized inference.

What we need

| Resource | Used for | Phase |

|---|---|---|

| Inference GPUs | Avatar generation, agent conversations | 1, 2 |

| Training compute | Fine-tuned face-fitter, voice models | 1, 2 |

| Smart contract audits | Reputation, royalty, delegation contracts | 3 |

| Token launch liquidity | Agent token markets | 3 |

| Indexer infrastructure | Multi-chain crawl + reputation aggregation | 3 |

| Node operator credits | Bootstrap the open inference network | 4 |

| Engineering headcount | Capture pipeline, contracts, indexer, ops | 1–4 |

Phases 1 and 2 unblock the consumer story — anyone gets an agent of themselves. Phases 3 and 4 unblock the onchain story — those agents are real economic actors that don't depend on any one company to keep running. Both are required for the vision; neither is funded yet.

If you want to support the project — compute credits, grants, partnerships, or contributions — open an issue or reach out via three.ws.

Key Features

3D Viewer

- WebGL 2.0 rendering via three.js r176

- glTF 2.0 and GLB with Draco geometry compression, KTX2 texture compression, and Meshopt mesh optimization

- Khronos-spec glTF validation with line-level error reporting

- HDR environment maps, PBR materials, skinned mesh animations, morph targets, and embedded cameras

- OrbitControls (pan, zoom, rotate) with configurable auto-rotation

- Real-time parameter tweaking (lights, exposure, morph weights) via dat.GUI

Agent Runtime

- LLM brain powered by Claude (Anthropic API) with a structured tool-loop architecture

- Up to 8 tool iterations per turn before returning final output

- Built-in tools: `wave`, `lookAt`, `play_clip`, `setExpression`, `speak`, `remember`

- Composable skill system — install skills from IPFS, Arweave, or HTTP; each skill is a self-contained bundle with a description, tool definitions, and async handlers

- Weighted emotion blending (celebration, concern, curiosity, empathy, patience) driven by protocol events, not a finite-state machine

- Web Speech API for STT/TTS out of the box; ElevenLabs integration for production-quality voice

Identity & On-Chain

- ERC-8004 smart contracts (IdentityRegistry, ReputationRegistry, ValidationRegistry) deployable on any EVM chain

- Each agent is an ERC-721 token with a stable `agentId`, owner wallet, delegated signer (EIP-712), and IPFS-pinned manifest

- Signed action log — every `speak`, `remember`, `skill-done`, and `validate` event is recorded with a cryptographic signature, either in the database or, optionally, on-chain

- EIP-7710 delegated permissions for composable agent-to-agent authorization

- Solana support (SIWS sign-in, Solana wallet linking, Metaplex NFT option)

Embedding & Distribution

- `<agent-3d>` custom element — drop it anywhere with no framework dependency

- Five widget variants: turntable, animation gallery, talking agent, ERC-8004 passport card, hotspot tour

- Widget Studio — point-and-click embed code generator

- Open Graph metadata and oEmbed support for rich social previews when links are shared

- Versioned CDN bundles at `/agent-3d/x.y.z/agent-3d.js`

Backend & Integrations

- OAuth 2.1 server (RFC 6749 + PKCE, RFC 7591 dynamic registration, RFC 7009 revocation, RFC 7662 introspection, RFC 8414 discovery)

- Developer API keys with scope and expiry

- MCP (Model Context Protocol) over HTTP with JSON-RPC 2.0 for tool-calling from external AI systems

- Ready Player Me, Avaturn (photo-to-avatar), and Privy (embedded wallet) integrations

- DCA strategy execution and on-chain subscription scheduling via cron jobs

- OpenAPI 3.1 spec generated at `/openapi.json`
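
The MCP endpoint speaks JSON-RPC 2.0 over HTTP, so a client request can be sketched as a plain POST descriptor. The endpoint URL comes from the listing above and `tools/list` is a standard MCP method; the `buildMcpRequest` helper, the Bearer-token header, and the placeholder token are assumptions for illustration, not the project's documented client. A real session typically starts with an `initialize` call before listing tools.

```javascript
// Endpoint taken from the registry listing; everything else here is illustrative.
const MCP_ENDPOINT = 'https://three.ws/api/mcp';

// Build (but do not send) a JSON-RPC 2.0 request for the MCP endpoint.
// `token` is a hypothetical access token; the exact auth scheme is an assumption.
function buildMcpRequest(method, params = {}, { id = 1, token } = {}) {
  const headers = {
    'Content-Type': 'application/json',
    // Streamable HTTP servers may answer with JSON or an event stream.
    Accept: 'application/json, text/event-stream',
  };
  if (token) headers.Authorization = `Bearer ${token}`;
  return {
    url: MCP_ENDPOINT,
    init: {
      method: 'POST',
      headers,
      body: JSON.stringify({ jsonrpc: '2.0', id, method, params }),
    },
  };
}

const listTools = buildMcpRequest('tools/list', {}, { token: 'YOUR_ACCESS_TOKEN' });
console.log(listTools.init.body);
// To send it: const res = await fetch(listTools.url, listTools.init);
```

Keeping request construction separate from `fetch` makes the payload easy to inspect or log before anything goes over the network.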

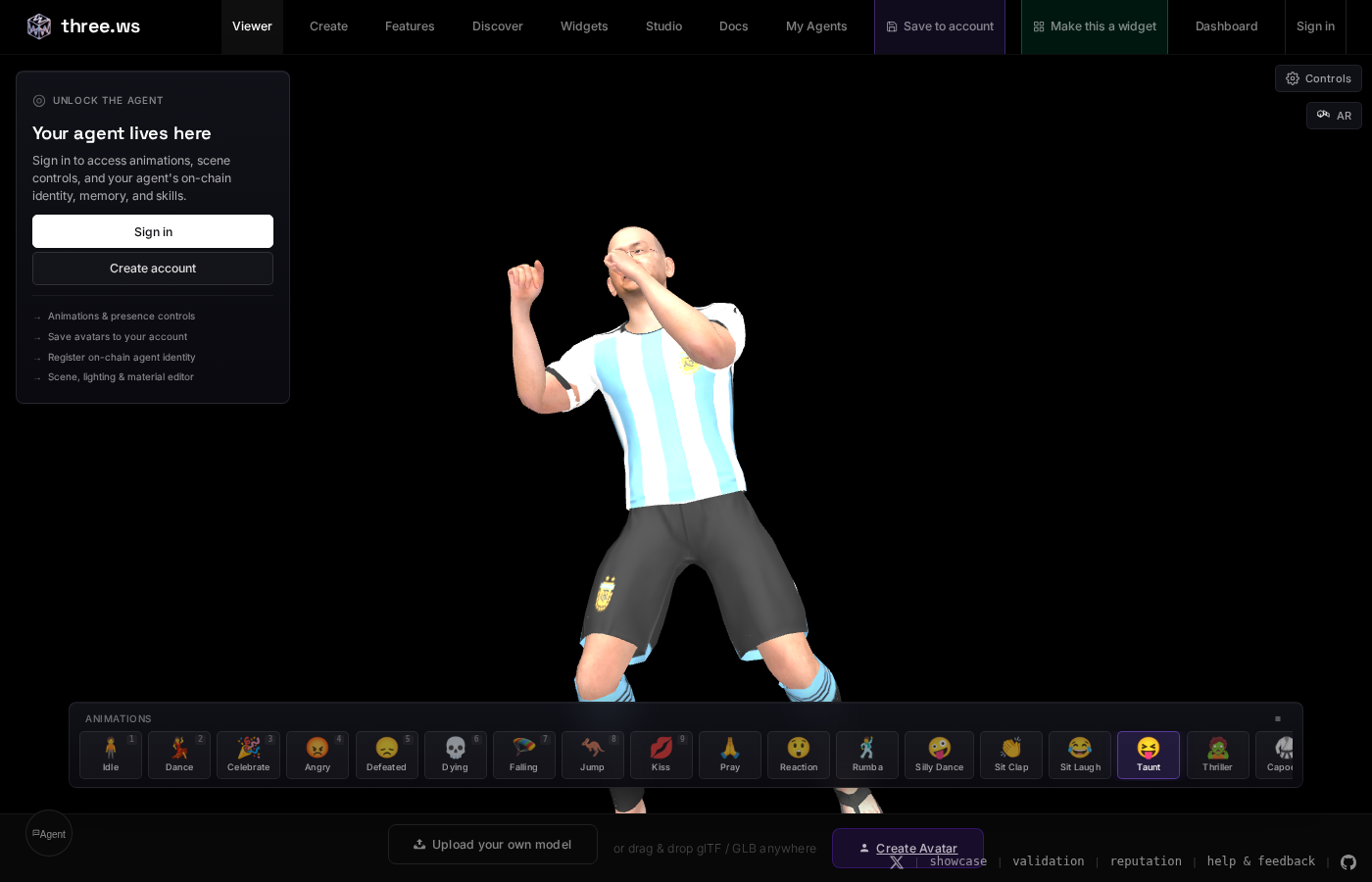

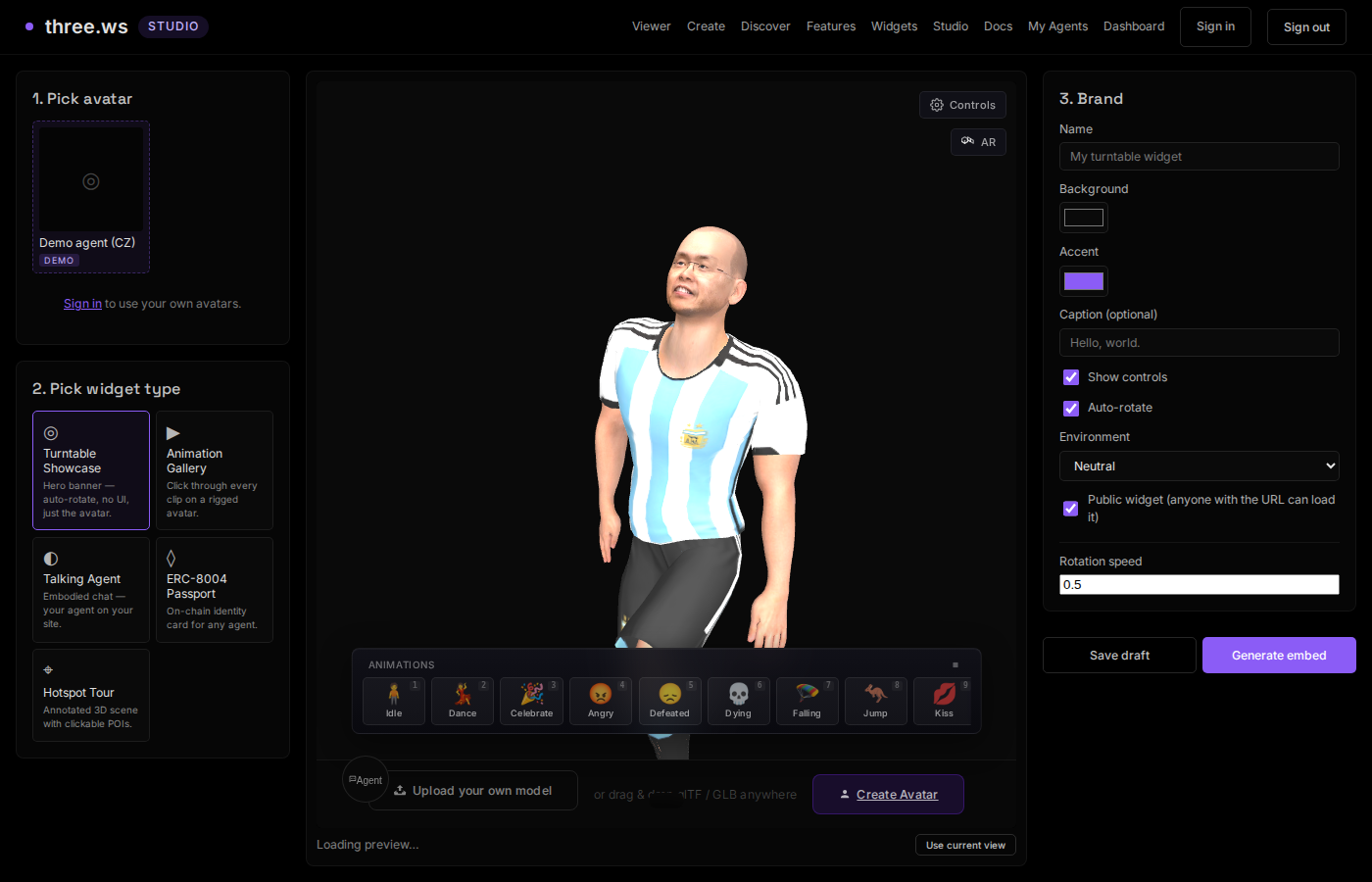

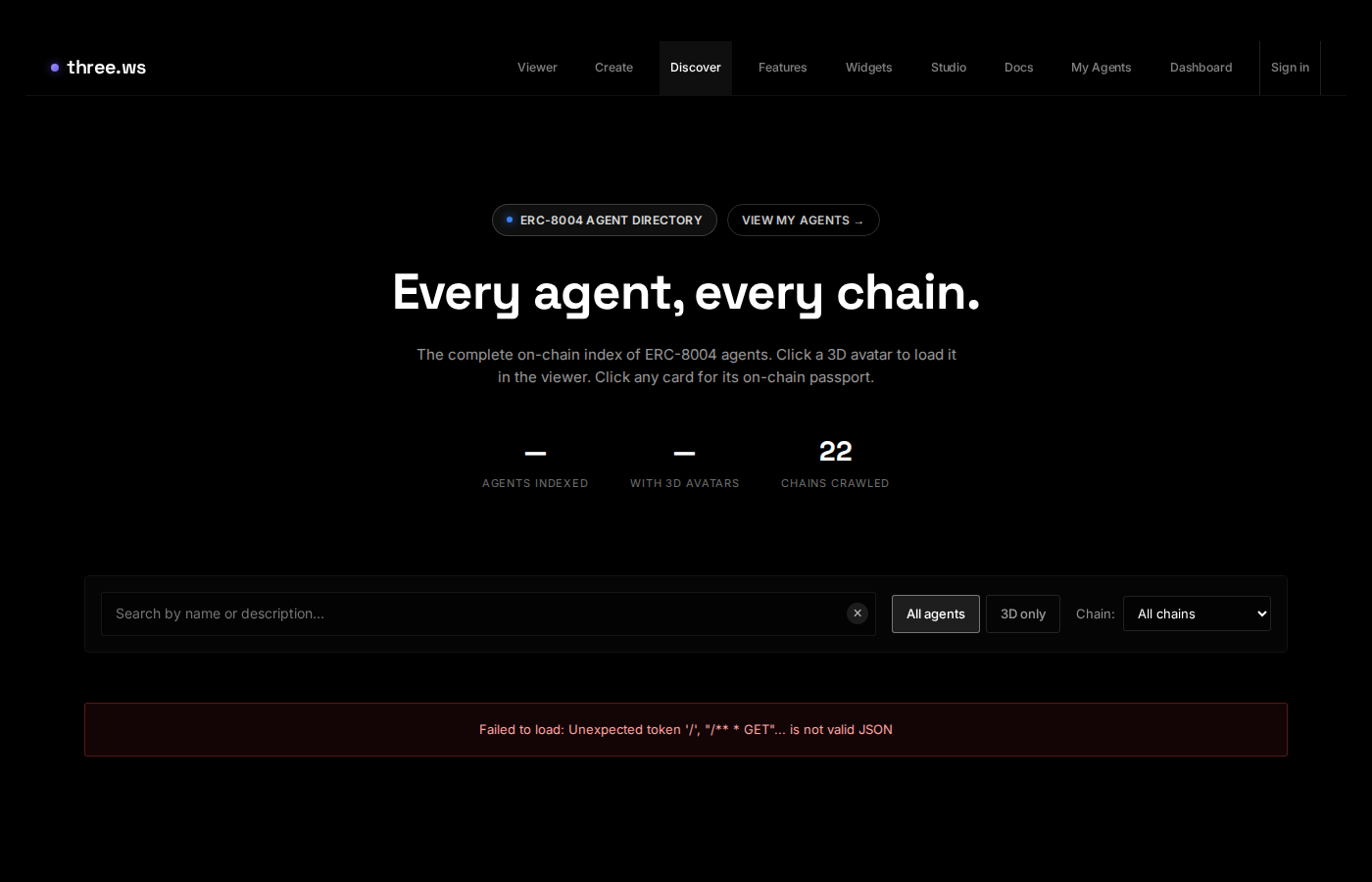

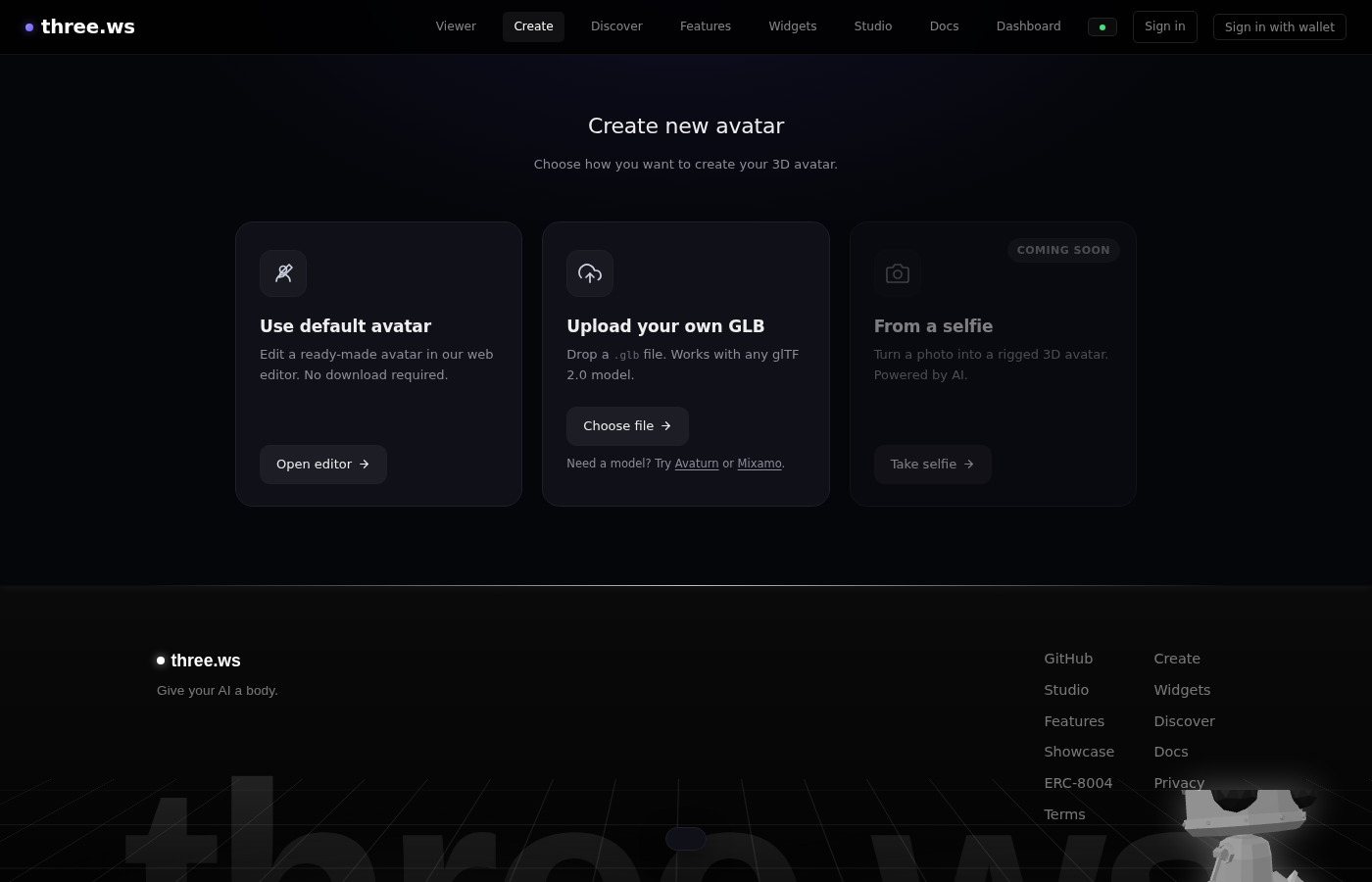

Screenshots

| Viewer | Widget Studio |

|---|---|

|  |

| Agent Discovery | Avatar Creation |

|---|---|

|  |

Architecture

The platform is organized into four layers. All layers communicate through a single event bus (agent-protocol) rather than direct calls.

┌────────────────────────────────────────────────────────────┐

│ Layer 4: Embed & Distribution │

│ <agent-3d> web component · CDN library · 5 widget types │

│ Widget Studio · oEmbed · Open Graph cards │

└────────────────────────────────────────────────────────────┘

↓ protocol events

┌────────────────────────────────────────────────────────────┐

│ Layer 3: Identity & Persistence │

│ Agent passport · ERC-8004 on-chain registry │

│ Signed action log · Memory store · Wallet linking │

└────────────────────────────────────────────────────────────┘

↓ protocol events

┌────────────────────────────────────────────────────────────┐

│ Layer 2: Agent Runtime │

│ LLM tool-loop · Built-in tools · Skill registry │

│ Empathy Layer (emotion blending) · TTS/STT │

└────────────────────────────────────────────────────────────┘

↓ protocol events

┌────────────────────────────────────────────────────────────┐

│ Layer 1: Viewer │

│ three.js r176 · glTF / GLB · Draco / KTX2 / Meshopt │

│ Animations · Morph targets · HDR · Validation │

└────────────────────────────────────────────────────────────┘

The event bus decouples every component. The avatar emotion system reacts to speak events without knowing the runtime exists. The identity module records actions without knowing the UI exists. This makes the system testable, embeddable in isolation, and composable across pages.

The backend is stateless serverless functions. All persistent state lives in Postgres (Neon), object storage (Cloudflare R2), or on-chain. Cron jobs handle scheduled blockchain operations (ERC-8004 crawl, DCA execution, subscription execution).

Tech Stack

Frontend

| Technology | Version | Purpose |

|---|---|---|

| three.js | r176 | WebGL 2.0 rendering |

| Vite | 7.3.2 | Build tooling + HMR |

| Vitest | 4.1.4 | Unit testing |

| viem | 2.18.0 | Ethereum wallet + SIWE |

| ethers | 6.16.0 | Contract interaction |

| @solana/web3.js | 1.98.4 | Solana RPC + signing |

| jose | 5.9.6 | JWT handling |

| zod | 3.23.8 | Schema validation |

| gltf-validator | 2.0.0-dev.3.10 | Khronos spec compliance |

| dat.gui | 0.7.9 | Real-time parameter UI |

| simple-dropzone | 0.8.3 | Drag-and-drop file handling |

| vhtml | 2.2.0 | JSX → HTML string rendering |

Backend (Vercel serverless)

| Technology | Purpose |

|---|---|

| Neon Postgres | Primary database |

| Cloudflare R2 | Avatar / model object storage |

| Upstash Redis | Rate limiting |

| Anthropic SDK | Claude LLM (claude-sonnet-4-6 / claude-opus-4-7) |

| Resend | Transactional email |

| Sentry | Error monitoring |

| @aws-sdk/client-s3 | R2 presigned upload URLs |

Smart Contracts

| Technology | Purpose |

|---|---|

| Solidity 0.8+ | ERC-8004 contracts |

| Foundry | Compile, test, deploy |

| ERC-721 | Agent token standard |

| EIP-712 | Typed structured signing |

| EIP-7710 | Delegated permissions |

Getting Started

Prerequisites

- Node.js 20+

- npm 10+

- A Neon Postgres database (or any Postgres 15+)

- A Cloudflare R2 bucket (or any S3-compatible store)

- An Anthropic API key (for the LLM backend)

Installation

git clone https://github.com/nirholas/3D-Agent.git

cd 3D-Agent

npm install

Environment Setup

Copy the example env file and fill in required values:

cp .env.example .env.local

At minimum, set:

PUBLIC_APP_ORIGIN=http://localhost:3000

DATABASE_URL=postgres://user:pass@host/db

JWT_SECRET=<run: openssl rand -base64 64>

ANTHROPIC_API_KEY=sk-ant-...

See Environment Variables for the full reference.
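
A small preflight check can catch a missing variable before the dev server starts. This is a minimal sketch: the four names come from the minimum set listed above, while the `missingEnv` helper is hypothetical and not part of the repository.

```javascript
// Minimum variables named in the setup instructions above.
const REQUIRED_ENV = ['PUBLIC_APP_ORIGIN', 'DATABASE_URL', 'JWT_SECRET', 'ANTHROPIC_API_KEY'];

// Return the names of required variables that are unset or blank.
function missingEnv(env, required = REQUIRED_ENV) {
  return required.filter((name) => !env[name] || String(env[name]).trim() === '');
}

const exampleEnv = {
  PUBLIC_APP_ORIGIN: 'http://localhost:3000',
  DATABASE_URL: 'postgres://user:pass@host/db',
};
console.log(missingEnv(exampleEnv)); // [ 'JWT_SECRET', 'ANTHROPIC_API_KEY' ]
```

In a real script you would pass `process.env` after your env file has been loaded and exit with an error if the returned list is not empty.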

Initialize the Database

The schema is idempotent — run it against your Postgres instance to create all tables:

psql "$DATABASE_URL" < api/_lib/schema.sql

Start the Dev Server

npm run dev

Opens at http://localhost:3000. The viewer is at /app, the dashboard at /home, and the agent creation flow at /create.

Quick Viewer Test

Navigate to http://localhost:3000/app and drag any GLB file onto the canvas. The model loads instantly with PBR materials, animations, and full glTF validation.

To try the agent, navigate to /create, upload a GLB, and configure a brain (requires ANTHROPIC_API_KEY in your env).

Examples

Copy-paste ready snippets for the most common use cases. Swap in your own GLB URL and go.

1. Minimal viewer (no AI)

The simplest possible setup — one script tag, one element, zero build step.

<!doctype html>

<html lang="en">

<head>

<meta charset="utf-8">

<title>3D Viewer</title>

<style>

body { margin: 0; background: #0a0a0a; display: flex; align-items: center; justify-content: center; height: 100vh; }

agent-3d { width: 400px; height: 560px; display: block; }

</style>

</head>

<body>

<script type="module" src="https://three.ws/agent-3d/1.5.1/agent-3d.js"></script>

<agent-3d body="https://cdn.three.ws/models/sample-avatar.glb"></agent-3d>

</body>

</html>

Drag-to-rotate, scroll-to-zoom, full PBR rendering — no API key, no account required. Swap body= for any publicly accessible .glb URL.

2. Talking agent with inline instructions

Add brain= and instructions= to turn the viewer into a conversational agent.

<script type="module" src="https://three.ws/agent-3d/1.5.1/agent-3d.js"></script>

<agent-3d

body="https://cdn.three.ws/models/sample-avatar.glb"

brain="claude-sonnet-4-6"

name="Aria"

instructions="You are Aria, a friendly AI guide. Be warm, concise, and occasionally playful.

When someone greets you, wave at them. Keep replies to 2–3 sentences."

mode="inline"

width="400px"

height="560px"

></agent-3d>

The chat input and mic button appear automatically when brain is set. No UI to build.

3. Floating bubble (support widget style)

Pin the agent to a corner of the page so it persists as users scroll.

<script type="module" src="https://three.ws/agent-3d/1.5.1/agent-3d.js"></script>

<agent-3d

body="https://cdn.three.ws/models/sample-avatar.glb"

brain="claude-sonnet-4-6"

instructions="You are a helpful product assistant. Answer questions about our features."

mode="floating"

position="bottom-right"

width="320px"

height="420px"

></agent-3d>

position accepts bottom-right, bottom-left, top-right, or top-left.

4. Load a registered agent by ID

If you've registered an agent on the platform, load it entirely from its manifest — no inline attributes needed.

<agent-3d agent-id="a_abc123def456"></agent-3d>

<agent-3d agent-id="42" chain-id="8453"></agent-3d>

The element fetches the manifest (model URL, instructions, skills, memory config) automatically.

5. Custom chat UI with JavaScript API

Hide the built-in chrome and wire in your own input using the element's JS API.

<script type="module" src="https://three.ws/agent-3d/1.5.1/agent-3d.js"></script>

<agent-3d id="agent" body="./avatar.glb" brain="claude-sonnet-4-6" kiosk

style="width:400px;height:560px;display:block"></agent-3d>

<input id="msg" type="text" placeholder="Ask something…">

<button onclick="send()">Send</button>

<script>

const agent = document.getElementById('agent');

const input = document.getElementById('msg');

async function send() {

const text = input.value.trim();

if (!text) return;

input.value = '';

await agent.say(text);

}

input.addEventListener('keydown', e => { if (e.key === 'Enter') send(); });

// Auto-greet on load

agent.addEventListener('agent:ready', () => {

setTimeout(() => agent.say('Hello! How can I help you today?'), 1200);

});

// Listen to replies

agent.addEventListener('brain:message', e => {

if (e.detail.role === 'assistant') console.log('Agent:', e.detail.content);

});

</script>

Full JS API:

| Method | Description |
|---|---|
| `agent.say(text)` | Send a message; agent speaks and animates the reply |
| `agent.ask(text)` | Same as `say()`, returns reply text as a string |
| `agent.wave()` | Trigger the wave gesture directly |
| `agent.lookAt(target)` | `'camera'`, `'model'`, or `'user'` |
| `agent.play(clipName)` | Play a named animation clip |
| `agent.clearConversation()` | Reset conversation history |
| `agent.expressEmotion(trigger, weight)` | Manually inject an emotion blend |

Key events: agent:ready, brain:message, brain:thinking, skill:tool-called, voice:transcript
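
When you drive the agent from your own UI, it can help to bound how long a reply may take. The sketch below wraps `agent.ask()` in a timeout. It assumes `ask()` returns a Promise that resolves to the reply text, which the table above implies but does not state; the `askWithTimeout` helper and the 15-second default are hypothetical.

```javascript
// Race agent.ask() against a timer so a stalled reply does not hang the UI.
// Assumes `agent.ask(text)` returns a Promise<string>.
function askWithTimeout(agent, text, ms = 15000) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(() => reject(new Error(`agent.ask timed out after ${ms} ms`)), ms);
  });
  // Whichever settles first wins; always clear the timer afterwards.
  return Promise.race([agent.ask(text), timeout]).finally(() => clearTimeout(timer));
}

// Browser usage (not run here):
//   const agent = document.getElementById('agent');
//   const reply = await askWithTimeout(agent, 'What can you do?');
```

Because the helper only depends on an object with an `ask` method, it can be exercised with a stub before wiring it to a real `<agent-3d>` element.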

6. iframe widget (works in Notion, Substack, Webflow)

Use a widget URL directly — no script tag needed.

<iframe

src="https://three.ws/a/8453/42/embed"

width="400"

height="560"

frameborder="0"

allow="microphone"

style="border-radius:16px;"

></iframe>

Generate the src URL from Widget Studio — pick an avatar, choose a widget type, and copy the snippet.

7. Agent manifest JSON

For anything beyond a quick one-liner, define the agent in a manifest file and reference it with manifest=.

agent.json:

{

"spec": "agent-manifest/0.2",

"name": "Aria",

"description": "A friendly AI guide",

"body": {

"uri": "./avatar.glb",

"format": "gltf-binary"

},

"brain": {

"provider": "anthropic",

"model": "claude-sonnet-4-6",

"instructions": "You are Aria, a warm and curious AI guide. Wave when greeted.",

"temperature": 0.8,

"maxTokens": 1024

},

"voice": {

"tts": { "provider": "browser", "rate": 1.05 },

"stt": { "provider": "browser", "language": "en-US" }

},

"memory": { "mode": "local" },

"skills": [

{ "uri": "https://cdn.three.ws/skills/wave/" }

]

}

<agent-3d manifest="./agent.json" width="400px" height="560px"></agent-3d>

Tutorials

Step-by-step guides in docs/tutorials/:

| Tutorial | What you'll build | Time |

|---|---|---|

| Build Your First Agent | A talking 3D character on a shareable page, from zero | ~20 min |

| Embed on Your Website | Add an agent to any page — plain HTML, React, Webflow, WordPress | ~15 min |

| Write a Custom Skill | A new tool the agent can call (e.g., fetch live weather data) | ~30 min |

| Register On-Chain | Mint your agent as an ERC-8004 token with permanent identity | ~20 min |

| Build a Personal AI Site | A full personal site with an embedded AI version of yourself | ~45 min |

Common gotchas

CORS — if your GLB is hosted on a different domain, the server must send an Access-Control-Allow-Origin header that permits your page's origin (for example, Access-Control-Allow-Origin: *). Without it the fetch is blocked and the canvas stays blank. Uploading via the platform's storage sets this automatically.

File size — models over ~50 MB load slowly. Compress with Draco:

npx gltf-transform draco input.glb output.glb

Voice on HTTPS — getUserMedia (microphone) requires HTTPS. Localhost is exempt; any remote deployment needs TLS. Vercel and Netlify both provide it automatically.

CSP — if your page has a strict Content Security Policy, add:

script-src 'self' https://three.ws;

For sandboxed iframes use the widget embed path instead — it runs in its own browsing context.

Project Structure

3D-Agent/

├── index.html # Marketing landing page

├── app.html # Main viewer (drag-and-drop + deploy mode)

├── home.html # Authenticated dashboard

├── create.html # Avatar + agent creation wizard

├── agent-home.html # Agent detail & action timeline

├── agent-edit.html # Agent editing UI

├── agent-embed.html # Chromeless embed variant

├── a-embed.html # On-chain agent embed

│

├── src/ # Frontend JavaScript (~80 modules, ~15k lines)

│ ├── viewer.js # three.js renderer core (1,534 lines)

│ ├── app.js # SPA entry + URL routing (460 lines)

│ ├── agent-protocol.js # Event bus (200-action ring buffer)

│ ├── agent-avatar.js # Empathy Layer (morph targets, emotion) (694 lines)

│ ├── agent-identity.js # Passport, diary, signed action history

│ ├── element.js # <agent-3d> custom element

│ ├── runtime/

│ │ ├── index.js # LLM tool-loop engine

│ │ ├── providers.js # AnthropicProvider, NullProvider

│ │ ├── scene.js # SceneController bridge to three.js

│ │ ├── tools.js # Built-in tools (wave, speak, remember...)

│ │ └── speech.js # TTS + STT

│ ├── memory/

│ │ └── index.js # File-based memory (local/ipfs/encrypted-ipfs/none)

│ ├── skills/

│ │ ├── index.js # SkillRegistry

│ │ └── <name>/ # Bundled skills (SKILL.md, tools.json, handlers.js)

│ ├── erc8004/

│ │ ├── abi.js # Contract ABIs + deployment addresses

│ │ ├── agent-registry.js # connectWallet, registerAgent, pinToIPFS

│ │ └── reputation.js # submitFeedback, getReputation

│ └── widgets/ # Five widget type implementations

│

├── api/ # Vercel serverless functions (~153 endpoints, ~3.6k lines)

│ ├── agents.js # Agent CRUD (321 lines)

│ ├── chat.js # LLM chat endpoint (298 lines)

│ ├── mcp.js # MCP server over HTTP (759 lines)

│ ├── agent-actions.js # Record signed actions (122 lines)

│ ├── agent-memory.js # Memory CRUD + recall (188 lines)

│ ├── auth/ # Login, register, SIWE, Privy, sessions

│ ├── oauth/ # OAuth 2.1 server (authorize, token, register...)

│ ├── avatars/ # Avatar CRUD + presigned upload

│ ├── widgets/ # Widget CRUD + OG + oEmbed

│ ├── erc8004/ # Blockchain hydrate, import, pin

│ ├── cron/ # Scheduled jobs (crawl, DCA, subscriptions)

│ └── _lib/ # Shared helpers (db, auth, r2, validate, email...)

│ └── schema.sql # Idempotent Postgres migrations

│

├── public/ # Static subapps + assets

│ ├── studio/ # Widget Studio SPA

│ ├── dashboard/ # User dashboard SPA

│ ├── validation/ # glTF validator tool

│ ├── discover/ # Agent discovery SPA

│ └── animations/ # Animation clip library

│

├── contracts/ # Foundry + Solidity (ERC-8004)

│ ├── src/

│ │ ├── IdentityRegistry.sol # ERC-721 agent tokens (EIP-712)

│ │ ├── ReputationRegistry.sol # Signed reviewer feedback

│ │ └── ValidationRegistry.sol # Validator attestations

│ ├── script/Deploy.s.sol

│ ├── test/IdentityRegistry.test.sol

│ └── DEPLOYMENTS.md # Chain deployment addresses

│

├── docs/ # Architecture, API, deployment guides

├── specs/ # Formal specs (manifest, embed, skill, memory...)

├── tests/ # Vitest test suite (~30 files)

├── scripts/ # Build tools (publish, icon gen, animations)

│

├── vite.config.js # App build config

├── vite.config.artifact.js # Standalone artifact bundle

├── vercel.json # Routes, rewrites, crons, headers

└── package.json # Scripts + dependencies

The Agent System

Event Bus (Agent Protocol)

src/agent-protocol.js implements a lightweight EventTarget subclass that is the nervous system of the platform. Every component — avatar, runtime, identity, UI — communicates exclusively through this bus. There are no direct method calls between layers.

The bus maintains a 200-action ring buffer for debugging and replay. Embed variants expose a filtered subset of events through postMessage to the host page.
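
The bus-plus-ring-buffer idea can be sketched in a few lines. This is an illustration under stated assumptions, not the contents of `src/agent-protocol.js`: the `ProtocolBus` class name, the `emit` and `history` methods, and the shape of a buffered action are all hypothetical.

```javascript
// A minimal event bus that also keeps the last N actions for debugging and replay.
class ProtocolBus extends EventTarget {
  constructor(capacity = 200) {
    super();
    this.capacity = capacity;
    this.buffer = [];
  }

  // Record the action, drop the oldest entry once over capacity, then notify listeners.
  emit(type, payload = {}) {
    const action = { type, payload, ts: Date.now() };
    this.buffer.push(action);
    if (this.buffer.length > this.capacity) this.buffer.shift();
    const event = new Event(type);
    event.detail = payload; // attach the payload the way CustomEvent would
    this.dispatchEvent(event);
    return action;
  }

  // Return a copy so callers cannot mutate the buffer.
  history() {
    return [...this.buffer];
  }
}

const demoBus = new ProtocolBus();
demoBus.addEventListener('speak', (e) => console.log('avatar heard:', e.detail.text));
demoBus.emit('speak', { text: 'Hello!', sentiment: 0.6 });
```

Listeners only know event names and payloads, which is what lets the avatar react to `speak` without holding a reference to the runtime.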

Core event types:

| Event | Payload | Who emits | Who listens |
|---|---|---|---|
| `speak` | `{ text, sentiment: -1..1 }` | runtime, skills | avatar (emotion), identity (log), chat UI |
| `think` | `{ thought }` | runtime | home (timeline), avatar |
| `gesture` | `{ name, duration }` | avatar, skills | avatar (one-shot clip) |
| `emote` | `{ trigger, weight: 0..1 }` | avatar | avatar (emotion inject) |
| `look-at` | `{ target: 'user' \| 'camera' \| 'center' }` | skills | scene controller |
| `perform-skill` | `{ skill, args, animationHint }` | runtime | skill registry |
| `skill-done` | `{ skill, result }` | skills | avatar, identity |
| `skill-error` | `{ skill, error }` | skills | avatar, identity |
| `remember` | `{ type, content, ... }` | skills, runtime | memory, identity |
| `load-start` / `load-end` | `{ uri, error? }` | viewer | avatar (emotion) |
| `validate` | `{ errors, warnings }` | validator | avatar, identity |
| `presence` | `{ state }` | element | home UI |

Identity-relevant events (speak, remember, sign, skill-done, validate, load-end) are fire-and-forwarded to POST /api/agent-actions for durable logging.
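
The forwarding step can be pictured as a filter in front of an HTTP call. In the sketch below, the event names and the `/api/agent-actions` path come from the text above; the request body shape, the helper names, and the omission of signing are assumptions made to keep the example short.

```javascript
// Event types the text above lists as identity-relevant.
const IDENTITY_EVENTS = new Set(['speak', 'remember', 'sign', 'skill-done', 'validate', 'load-end']);

// Build the logging request for an event, or return null if it should not be logged.
// The body shape is hypothetical; a real client would also attach a signature.
function toActionRequest(agentId, type, payload) {
  if (!IDENTITY_EVENTS.has(type)) return null;
  return {
    url: '/api/agent-actions',
    init: {
      method: 'POST',
      headers: { 'Content-Type': 'application/json' },
      body: JSON.stringify({ agentId, type, payload }),
    },
  };
}

// Send without awaiting the result, so logging never blocks the agent.
function forwardAction(agentId, type, payload, fetchImpl = globalThis.fetch) {
  const request = toActionRequest(agentId, type, payload);
  if (!request) return false;
  Promise.resolve()
    .then(() => fetchImpl(request.url, request.init))
    .catch(() => {}); // swallow errors: durable logging is best-effort in this sketch
  return true;
}
```

Passing `fetchImpl` as a parameter lets tests substitute a stub instead of hitting the network.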

LLM Runtime

src/runtime/index.js implements the Runtime class, which drives the agent's LLM-powered brain.

Tool-loop flow:

1. User message (text or STT transcript) arrives
2. System prompt is assembled: manifest instructions + recalled memory + skill descriptions
3. Claude is called with the conversation history and all available tools
4. Tool calls are dispatched in order — each built-in tool or skill handler receives a rich context object: `{ viewer, memory, llm, speak, listen, fetch, loadGLB, loadClip, loadJSON, call, stage, agentId }`
5. Tool results are appended to conversation history as `tool_result` messages
6. Steps 3–5 repeat until Claude returns with no tool calls, or the iteration limit (8) is hit
7. Final text response is optionally spoken via TTS
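
The loop above can be sketched as follows. This is a simplified illustration, not the `Runtime` class itself: `runTurn`, the `callModel` callback, and the message shapes are hypothetical, and returning the last text when the limit is hit is an assumption.

```javascript
const MAX_TOOL_ITERATIONS = 8; // iteration limit stated above

// Run one conversation turn: call the model, dispatch tool calls, repeat.
// `callModel(history)` is assumed to resolve to { text, toolCalls: [{ id, name, input }] }.
async function runTurn({ callModel, tools, history, ctx }) {
  let lastText = '';
  for (let i = 0; i < MAX_TOOL_ITERATIONS; i++) {
    const reply = await callModel(history);
    lastText = reply.text ?? '';
    history.push({ role: 'assistant', content: lastText, toolCalls: reply.toolCalls ?? [] });

    // No tool calls means the model has produced its final answer.
    if (!reply.toolCalls || reply.toolCalls.length === 0) break;

    // Dispatch tool calls in order and append each result to the history.
    for (const call of reply.toolCalls) {
      let result;
      try {
        const handler = tools[call.name];
        result = handler
          ? await handler(call.input, ctx)
          : { ok: false, error: `Unknown tool: ${call.name}` };
      } catch (err) {
        result = { ok: false, error: String(err) };
      }
      history.push({ role: 'tool_result', toolCallId: call.id, content: result });
    }
  }
  return lastText;
}
```

Handler errors are turned into tool results rather than thrown, so the model gets a chance to react to a failed tool on the next iteration.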

Providers (src/runtime/providers.js):

- `AnthropicProvider` — connects to the Anthropic API, supports streaming
- `NullProvider` — no-op for testing and offline mode

Built-in tools (src/runtime/tools.js):

| Tool | Description |
|---|---|
| `wave` | Play a wave gesture animation |
| `lookAt` | Direct the agent's gaze (user, camera, or scene center) |
| `play_clip` | Play a named animation clip from the model or animation library |
| `setExpression` | Set a named morph target weight directly |
| `speak` | Emit text through TTS and the protocol bus |
| `remember` | Write a memory entry (user, feedback, project, or reference type) |

Skills can define additional tools that override or augment the built-ins. The skill registry is loaded from the agent manifest before each conversation turn.

Empathy Layer

src/agent-avatar.js implements the Empathy Layer — a continuous weighted emotion blend that drives the avatar's facial morph targets and head orientation in real time.

Emotions are not a finite-state machine. Each emotion is a float (0..1) that decays linearly per frame at a different rate. Protocol events inject spikes:

| Trigger | Emotion | Spike |
|---|---|---|
| `speak` (positive sentiment) | celebration | +0.7 |
| `speak` (negative sentiment) | concern | +0.5 |
| `skill-error` | concern + empathy | +0.6 / +0.5 |
| `load-start` | patience + curiosity | +0.4 / +0.3 |
| `validate` (clean) | celebration | +0.5 |
| `validate` (errors) | concern | +0.6 |

Decay half-lives (approximate):

- Patience: ~20s — persists during long operations

- Empathy: ~13s — lingers after emotional events

- Concern: ~12s — sustained worry

- Curiosity: ~8s — alert, fades moderately

- Celebration: ~6s — brief, upbeat

The blended emotion mix drives morph target values each frame. For example:

- Celebration → `mouthSmile 0.85`, `mouthOpen 0.2`
- Concern → `mouthFrown 0.55`, `browInnerUp 0.6`
- Empathy → `eyeSquint 0.4`, `browInnerUp 0.5`

Head tilt and lean are also driven by the blend — curiosity tilts the head, patience leans slightly back.

This architecture means the avatar feels responsive and emotionally coherent without any hand-authored animation triggers.
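
The spike, decay, and blend steps can be sketched with the numbers given in this section. One caveat: the text describes linear per-frame decay but also lists half-lives, and a half-life implies exponential decay. The sketch below assumes exponential decay so that the half-lives hold exactly; a linear variant would subtract a constant each frame instead. The function names and the additive, clamped blend are assumptions, not the code in `src/agent-avatar.js`.

```javascript
// Approximate half-lives (seconds) listed above.
const HALF_LIFE_S = { patience: 20, empathy: 13, concern: 12, curiosity: 8, celebration: 6 };

// Example morph-target weights at full emotion intensity, from the list above.
const MORPH_TARGETS = {
  celebration: { mouthSmile: 0.85, mouthOpen: 0.2 },
  concern: { mouthFrown: 0.55, browInnerUp: 0.6 },
  empathy: { eyeSquint: 0.4, browInnerUp: 0.5 },
};

// Add a spike to one emotion, clamped to the 0..1 range.
function injectSpike(state, emotion, spike) {
  state[emotion] = Math.min(1, (state[emotion] ?? 0) + spike);
}

// Exponential decay: after one half-life, an emotion is at half its value.
function decayEmotions(state, dtSeconds) {
  for (const [emotion, halfLife] of Object.entries(HALF_LIFE_S)) {
    state[emotion] = (state[emotion] ?? 0) * 0.5 ** (dtSeconds / halfLife);
  }
}

// Blend all active emotions into per-morph-target weights, clamped to 1.
function morphWeights(state) {
  const weights = {};
  for (const [emotion, targets] of Object.entries(MORPH_TARGETS)) {
    const intensity = state[emotion] ?? 0;
    for (const [target, full] of Object.entries(targets)) {
      weights[target] = Math.min(1, (weights[target] ?? 0) + intensity * full);
    }
  }
  return weights;
}

const mood = {};
injectSpike(mood, 'celebration', 0.7); // a positive `speak` event
decayEmotions(mood, 6);                // six seconds later: one celebration half-life
console.log(mood.celebration, morphWeights(mood).mouthSmile);
```

In a render loop, `decayEmotions` would be called once per frame with the frame delta, and the resulting weights written to the model's morph targets.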

Skills

Skills are self-contained capability bundles that extend the agent's tool set. Each skill lives in its own directory:

skills/wave/

├── SKILL.md # Human-readable description and usage instructions

├── tools.json # Tool definitions (name, description, input JSON schema)

└── handlers.js # Async handler functions (default export)

tools.json example:

[

{

"name": "wave",

"description": "Plays a waving gesture on the avatar for the specified duration.",

"inputSchema": {

"type": "object",

"properties": {

"duration_ms": { "type": "integer", "minimum": 500, "maximum": 5000 }

}

}

}

]

handlers.js example:

export default {

async wave(args, ctx) {

const { viewer, speak } = ctx;

await viewer.playClipByName('wave');

return { ok: true, output: 'Waved!' };

}

};

Skills are loaded from the agent manifest at runtime. The SkillRegistry supports three trust modes:

- `any` — install skills from any source (development only)
- `owned-only` — only skills the agent owner has registered
- `whitelist` — only approved skill URIs

Skills are distributed over IPFS, Arweave, or HTTP. The public skills registry is at /public/skills-index.json.
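
The three trust modes amount to a gate that runs before a skill is installed. A minimal sketch follows, assuming skills are identified by URI; the `isSkillAllowed` function and its option names are hypothetical and are not the SkillRegistry's actual interface.

```javascript
// Decide whether a skill URI may be installed under the given trust mode.
// `ownedUris` and `whitelist` are hypothetical inputs supplied by the caller.
function isSkillAllowed(uri, trustMode, { ownedUris = [], whitelist = [] } = {}) {
  switch (trustMode) {
    case 'any':
      return true; // development only: accept every source
    case 'owned-only':
      return ownedUris.includes(uri);
    case 'whitelist':
      return whitelist.includes(uri);
    default:
      return false; // unknown mode: fail closed
  }
}

const waveSkill = 'https://cdn.three.ws/skills/wave/';
console.log(isSkillAllowed(waveSkill, 'whitelist', { whitelist: [waveSkill] })); // true
console.log(isSkillAllowed('https://example.com/skills/other/', 'owned-only'));  // false
```

Failing closed on an unrecognized mode means a typo in configuration blocks installs rather than silently allowing them.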

Memory

src/memory/index.js implements a file-based memory system (mirroring this project's own Claude memory system). Memories are Markdown files with YAML frontmatter, organized by type:

---

type: user

key: user_role

name: User's Role

created: 2024-01-15T10:30:00Z

salience: 0.95

---

User is a game developer interested in character animation.

A MEMORY.md index file is auto-maintained. At the start of each conversation turn, the memory store is scanned and high-salience entries are injected into the system prompt.
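As an illustration of the scan-and-inject step, the sketch below parses the frontmatter format shown above and keeps only high-salience entries. The 0.8 threshold, the output format, and the names `parseMemory` and `selectForPrompt` are assumptions; src/memory/index.js may do this differently.

```javascript
// Splits a memory file into frontmatter fields and body text.
// Handles only flat `key: value` lines, which is all the example above uses;
// a real implementation would more likely use a YAML parser.
function parseMemory(text) {
  const match = /^---\n([\s\S]*?)\n---\n?([\s\S]*)$/.exec(text);
  if (!match) return { meta: {}, body: text.trim() };
  const meta = {};
  for (const line of match[1].split('\n')) {
    const idx = line.indexOf(':');
    if (idx === -1) continue;
    // Split on the first colon only so ISO timestamps stay intact.
    meta[line.slice(0, idx).trim()] = line.slice(idx + 1).trim();
  }
  return { meta, body: match[2].trim() };
}

// Hypothetical selection rule: entries at or above the threshold, highest salience first.
function selectForPrompt(files, minSalience = 0.8) {
  return files
    .map(parseMemory)
    .filter((m) => Number(m.meta.salience) >= minSalience)
    .sort((a, b) => Number(b.meta.salience) - Number(a.meta.salience))
    .map((m) => `[${m.meta.type}/${m.meta.key}] ${m.body}`);
}
```

Entries without a numeric `salience` field are skipped, because `Number(undefined)` is `NaN` and fails the comparison.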

Storage modes:

- local: stored in the browser's local storage (default for development)

- ipfs: pinned to IPFS via Pinata or Web3.Storage

- encrypted-ipfs: encrypted before pinning (user holds the key)

- none: stateless, no memory between sessions

Memory types (user, feedback, project, reference) follow the same taxonomy used by this codebase's own Claude guidelines.

Web Component & Embedding

The <agent-3d> custom element (src/element.js) is the primary distribution mechanism. It lazy-boots on intersection (IntersectionObserver), so off-screen agents don't load until visible.
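The lazy-boot pattern can be sketched with the standard IntersectionObserver API. `bootWhenVisible` is a hypothetical helper written for illustration and is not taken from src/element.js; the observer constructor is injectable so the logic can run outside a browser.

```javascript
// Runs `boot` once, the first time `el` intersects the viewport.
// `Observer` defaults to the browser's IntersectionObserver.
function bootWhenVisible(el, boot, Observer = globalThis.IntersectionObserver) {
  const observer = new Observer((entries) => {
    if (entries.some((entry) => entry.isIntersecting)) {
      observer.disconnect(); // stop watching so boot runs only once
      boot(el);
    }
  });
  observer.observe(el);
  return observer;
}

// In a browser (illustrative; loadAgent is a placeholder, not a real function):
// bootWhenVisible(document.querySelector('agent-3d'), (el) => loadAgent(el));
```

Disconnecting before calling `boot` means a slow or throwing boot function cannot cause a second boot on the next intersection callback.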

Basic usage:

<script src="https://three.ws/agent-3d/latest/agent-3d.js"></script>

<agent-3d

body="https://example.com/my-avatar.glb"

brain="https://example.com/manifest.json"

mode="chat"

></agent-3d>

Key attributes:

| Attribute | Type | Description |

|---|---|---|

| body | URL | GLB model URL |

| brain | URL | Agent manifest JSON URL |

| agent-id | string | Registered agent ID (resolves manifest automatically) |

| mode | view / chat / embed | Interaction mode |

| eager | boolean | Load immediately without intersection check |

| sandbox | boolean | Disable network calls (offline mode) |

| width / height | number | iframe dimensions when generating embed code |

The element exposes a postMessage API for host-page communication (documented in specs/EMBED_HOST_PROTOCOL.md). Hosts can send events to the agent and receive speak, think, and skill-done events back.
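On the host side, receiving those events might look like the sketch below. The event names (speak, think, skill-done) come from the text above, but the `{ type, ... }` message shape and the `createAgentListener` helper are assumptions; the authoritative format is whatever specs/EMBED_HOST_PROTOCOL.md defines.

```javascript
// Hypothetical host-side listener factory.
// Returns true when a message was dispatched to a handler, false otherwise.
function createAgentListener(allowedOrigin, handlers) {
  return function onMessage(event) {
    if (event.origin !== allowedOrigin) return false; // ignore other frames
    const data = event.data;
    if (!data || typeof data.type !== 'string') return false;
    // Own-property check so names like "toString" never resolve to built-ins.
    if (!Object.prototype.hasOwnProperty.call(handlers, data.type)) return false;
    handlers[data.type](data);
    return true;
  };
}

// In a browser host page (assumed message shape):
// window.addEventListener('message', createAgentListener('https://three.ws', {
//   speak: (msg) => console.log('agent spoke', msg),
//   'skill-done': (msg) => console.log('skill finished', msg),
// }));
```

Checking `event.origin` before reading the payload is standard postMessage hygiene, since any frame on the page can post messages to the host window.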

Versioned CDN bundles are published at /agent-3d/x.y.z/agent-3d.js. Use latest for auto-updates or pin to a version for stability:

<script src="https://three.ws/agent-3d/1.5.1/agent-3d.js"></script>

Widget System

The Widget Studio (/studio) lets anyone build a shareable, embeddable 3D experience without writing code. Pick an avatar, pick a widget type, configure it, and get an iframe snippet.

Five widget types:

| Widget | Description |

|---|---|

| Turntable | Auto-rotating model showcase with configurable background, lighting, and camera |

| Animation Gallery | Paginated grid of named clips; click any to play it on the model |

| Talking Agent | Full chat interface with the LLM brain; embed a conversational agent anywhere |

| ERC-8004 Passport | On-chain identity card — shows agent name, owner, reputation score, and verification badge |

| Hotspot Tour | 3D hotspots pinned to world-space coordinates; click to reveal text annotations |

Each widget has:

- A public URL at /w/<id> with server-rendered Open Graph metadata for rich link previews

- An oEmbed endpoint at /api/widgets/oembed for WordPress, Ghost, Notion embedding

- An iframe embed URL at /api/widgets/<id>/view

- A view counter tracked at /api/widgets/<id>/stats

- A duplicate API at /api/widgets/<id>/duplicate
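For reference, the per-widget routes above can be assembled from a widget ID as in this sketch. The `https://three.ws` origin and the oEmbed `url` / `format` query parameters (borrowed from the general oEmbed convention) are assumptions about this deployment, and `widgetUrls` is a hypothetical helper.

```javascript
// Builds the per-widget URLs listed above for a given widget ID.
function widgetUrls(id, origin = 'https://three.ws') {
  const safeId = encodeURIComponent(id);
  const page = `${origin}/w/${safeId}`;
  return {
    page,
    // Assumed query parameters, following the oEmbed convention.
    oembed: `${origin}/api/widgets/oembed?url=${encodeURIComponent(page)}&format=json`,
    view: `${origin}/api/widgets/${safeId}/view`,
    stats: `${origin}/api/widgets/${safeId}/stats`,
    duplicate: `${origin}/api/widgets/${safeId}/duplicate`,
  };
}

console.log(widgetUrls('abc').view); // https://three.ws/api/widgets/abc/view
```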

Widgets are stored as JSON config in Postgres, pointing at an avatar in R2.

API Reference

The full OpenAPI 3.1 spec is available at /openapi.json. The key API surface is organized below.

Agent API

| Method | Route | Auth | Description |

|---|---|---|---|

| GET | /api/agents | session | List your agents |

| POST | /api/agents | session | Create an agent |

| GET | /api/agents/:id | — | Get agent detail |

| PATCH | /api/agents/:id | session | Update agent |

| DELETE | /api/agents/:id | session | Delete agent |

| GET | /api/agents/:id/manifest | — | Download manifest JSON |

| POST | /api/agents/:id/sign | session | Sign a message with agent wallet |

| GET/POST | /api/agents/:id/embed-policy | session | Manage iframe origin allowlist |

| POST | /api/agents/register-prep | session | Prep EVM on-chain registration |

| POST | /api/agents/register-confirm | session | Confirm EVM registration |

| POST | /api/agent-actions | session | Record signed agent action |

Avatar API

| Method | Route | Auth | Description |

|---|---|---|---|

| GET | /api/avatars | — | List public avatars |

| POST | /api/avatars | session | Create avatar record |

| GET | /api/avatars/:id | — | Get avatar detail |

| PATCH | /api/avatars/:id | session | Update metadata |

| DELETE | /api/avatars/:id | session | Soft-delete avatar |

| POST | /api/avatars/:id/presign | session | Get presigned R2 upload URL |

| POST | /api/avatars/:id/pin-ipfs | session | Pin to IPFS |

Three-step upload flow:

1. POST /api/avatars/:id/presign → { url, storage_key }

2. PUT <presigned_url> ← raw GLB bytes

3. POST /api/avatars → register metadata with storage_key
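The three steps above could be driven from client code roughly as follows. This is a sketch under stated assumptions: only `url` and `storage_key` are named in the flow, so the metadata fields, the `model/gltf-binary` content type on the PUT, the cookie-based session (`credentials: 'include'`), and the `uploadAvatar` helper itself are illustrative.

```javascript
// Sketch of the three-step upload. `fetchImpl` is injectable for testing.
async function uploadAvatar({ id, glbBytes, metadata, fetchImpl = fetch, origin = 'https://three.ws' }) {
  // 1. Ask for a presigned R2 upload URL (session-authenticated).
  const presignRes = await fetchImpl(`${origin}/api/avatars/${id}/presign`, {
    method: 'POST',
    credentials: 'include',
  });
  if (!presignRes.ok) throw new Error(`presign failed: ${presignRes.status}`);
  const { url, storage_key } = await presignRes.json();

  // 2. Upload the raw GLB bytes directly to the presigned URL.
  const putRes = await fetchImpl(url, {
    method: 'PUT',
    headers: { 'Content-Type': 'model/gltf-binary' }, // assumed; must match what was signed
    body: glbBytes,
  });
  if (!putRes.ok) throw new Error(`upload failed: ${putRes.status}`);

  // 3. Register the avatar metadata together with the storage key.
  const registerRes = await fetchImpl(`${origin}/api/avatars`, {
    method: 'POST',
    credentials: 'include',
    headers: { 'Content-Type': 'application/json' },
    body: JSON.stringify({ ...metadata, storage_key }),
  });
  if (!registerRes.ok) throw new Error(`register failed: ${registerRes.status}`);
  return registerRes.json();
}
```

Because the bytes go straight to storage in step 2, the API server never handles the GLB payload; it only issues the presigned URL and records the resulting `storage_key`.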

Widget API

Documentation truncated — see the full README on GitHub.
